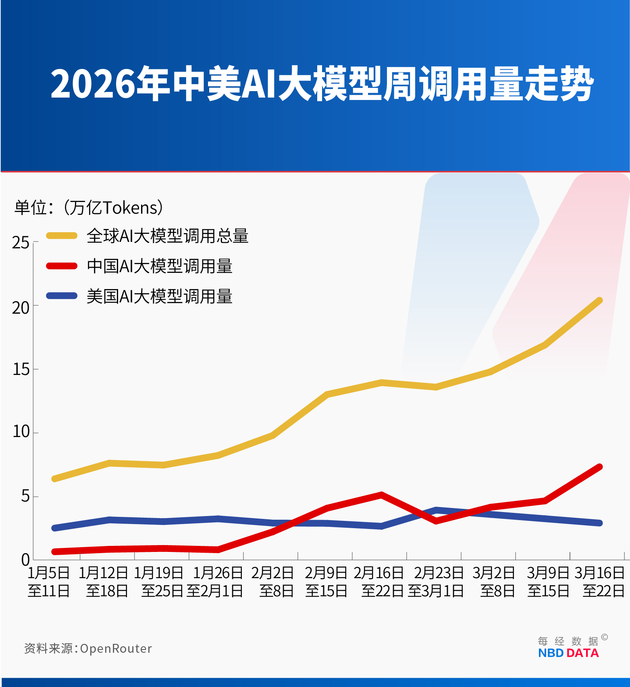

According to the latest data from OpenRouter reviewed by National Business Daily (NBD), global usage of large AI models reached 20.4 trillion tokens in the week of March 16–22, up 20.7% week-over-week.

Of that total, Chinese AI models recorded 7.359 trillion tokens, a sharp increase of 56.91% from the previous week, while U.S. models declined 10.32% to 2.954 trillion tokens. This marks the third consecutive week that Chinese models have surpassed their U.S. counterparts in total token usage.
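To make the week-over-week figures concrete, the short sketch below backs out the implied previous-week totals from the numbers quoted above. The function name `previous_value` is illustrative, and the results are derived by arithmetic only; they are not figures reported by OpenRouter or NBD.

```python
def previous_value(current: float, pct_change: float) -> float:
    """Back out last week's value from this week's value and the
    week-over-week percentage change."""
    return current / (1 + pct_change / 100)

# Figures quoted in the article, in trillions of tokens.
china_now, china_change = 7.359, 56.91
us_now, us_change = 2.954, -10.32

# Implied totals for the previous week (derived, not reported).
china_prev = previous_value(china_now, china_change)
us_prev = previous_value(us_now, us_change)

print(f"China, implied previous week: {china_prev:.2f}T")
print(f"U.S., implied previous week:  {us_prev:.2f}T")
print(f"China/U.S. ratio this week:   {china_now / us_now:.2f}x")
```

Under these figures, Chinese models would have gone from roughly 4.7 trillion to 7.4 trillion tokens in a single week, while U.S. models slipped from roughly 3.3 trillion to just under 3 trillion.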

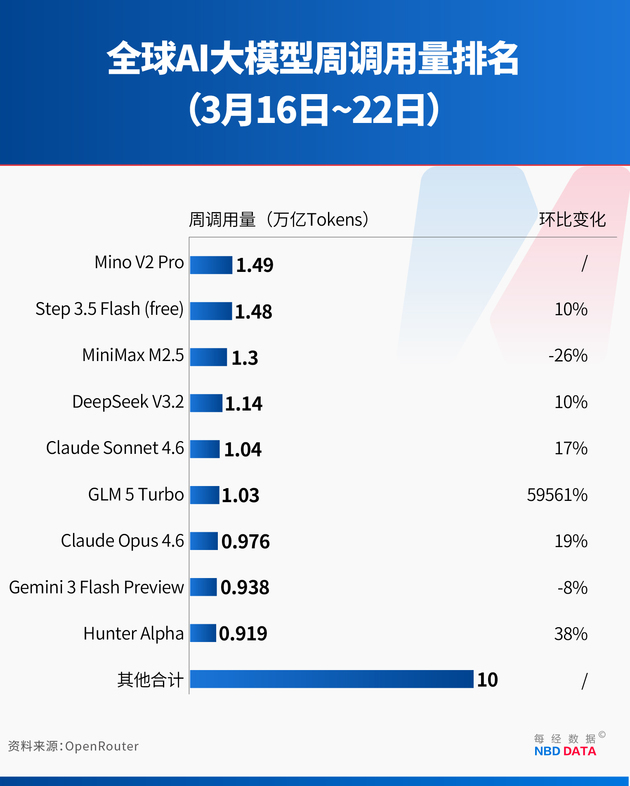

Notably, the top four models by global token consumption last week were all from China. Xiaomi’s MiMo V2 Pro ranked first with 1.49 trillion tokens, followed closely by StepFun’s Step 3.5 Flash (free) at 1.48 trillion tokens, up 10% week-over-week. MiniMax M2.5, after holding the top spot for five consecutive weeks, slipped to third with 1.3 trillion tokens, down 26%. DeepSeek V3.2 ranked fourth with 1.14 trillion tokens, up 10%.

China Tops the U.S. in Weekly Token Usage

February marked a turning point. For the first time, Chinese models surpassed U.S. models in weekly token usage.

Data from OpenRouter, the world’s largest AI model API aggregation platform, shows that during the week of February 9–15, Chinese models reached 4.12 trillion tokens, exceeding U.S. models at 2.94 trillion. The following week (February 16–22), China’s total climbed further to 5.16 trillion tokens, representing a 127% increase over three weeks, while U.S. usage fell to 2.7 trillion.

At the same time, four of the top five models globally were from China, highlighting a broad-based rise rather than reliance on a single breakout product.

Token consumption is widely regarded as a more accurate indicator of real usage intensity, user engagement, and commercial value than user counts. A token is the smallest unit of text that an AI model processes.

From Marginal Presence to Dominance

OpenRouter aggregates hundreds of large language models and serves over five million developers, making its API usage data a key barometer of real-world adoption. Importantly, its user base is predominantly overseas, with U.S. developers accounting for 47.17% and Chinese developers just 6.01%, lending credibility to the global competitiveness reflected in the rankings.

Over the past year, token usage has grown explosively. In early March 2025, the top ten models on the platform collectively generated just 1.24 trillion tokens per week. By mid-February 2026, that figure had surged to 13.95 trillion—more than a tenfold increase.

In 2025, U.S. models accounted for nearly 70% of usage among the top ten, compared to less than 20% for Chinese models. The trend reversed in 2026. By early February, Chinese models had already reached 2.27 trillion tokens per week, signaling strong momentum. Within a week they overtook U.S. models, and they have continued to widen the lead.
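The growth figures in this section can be checked with simple arithmetic. The sketch below is illustrative only: the helper `growth` is a hypothetical name, and pairing the 2.27 trillion early-February figure with the 5.16 trillion figure for February 16–22 is an assumption about how the 127% three-week increase quoted earlier was computed.

```python
def growth(start: float, end: float) -> tuple[float, float]:
    """Return (multiple, percent increase) when going from start to end."""
    multiple = end / start
    return multiple, (multiple - 1) * 100

# Top-ten models, trillions of tokens per week:
# early March 2025 -> mid-February 2026.
top10_multiple, top10_pct = growth(1.24, 13.95)

# Chinese models, trillions of tokens per week:
# early February 2026 -> week of February 16-22 (assumed pairing).
china_multiple, china_pct = growth(2.27, 5.16)

print(f"Top ten: {top10_multiple:.2f}x")
print(f"Chinese models: {china_multiple:.2f}x (+{china_pct:.0f}%)")
```

On these inputs the top-ten total grows a little over elevenfold, consistent with "more than a tenfold increase", and the Chinese total grows by about 127%.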

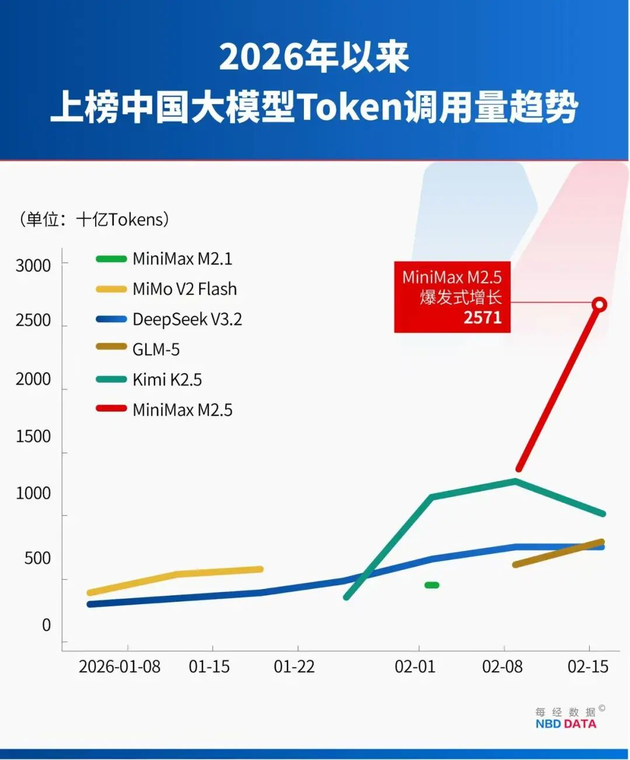

This growth has been driven by a cluster of Chinese AI firms rather than a single dominant player.

Clustered Rise of Chinese Models

During the week of February 16–22, four Chinese models—MiniMax M2.5, Moonshot AI’s Kimi K2.5, Zhipu AI’s GLM-5, and DeepSeek V3.2—accounted for 85.7% of total token usage among the top five models.

MiniMax’s M2.5, launched on February 13, climbed to the top of the rankings within a week and contributed 1.44 trillion tokens to the platform’s 3.21 trillion token increase during February 9–15 alone.

Kimi K2.5, released on January 27, saw rapid growth driven by its native multimodal architecture and parallel agent capabilities, which allow up to 100 agents to operate simultaneously, improving task efficiency by 3 to 10 times. Within a month of its release, its cumulative revenue reportedly exceeded the company’s total revenue for all of 2025.

Zhipu AI’s GLM-5, launched on February 12, gained traction due to its 200K context window and optimization for long-horizon agent tasks, reaching 0.8 trillion tokens in its second week.

Although Alibaba’s Qwen models appeared less frequently in weekly rankings, a joint report by Andreessen Horowitz (a16z) and OpenRouter shows that the full Qwen family reached 5.59 trillion tokens in total usage, ranking second globally behind DeepSeek at 14.37 trillion.

Cost Advantage: A Decisive Factor

A key driver behind the rapid adoption of Chinese models is their significant cost advantage.

On OpenRouter, the input price for MiniMax M2.5 and GLM-5 is $0.30 per million tokens, compared to $5 for Anthropic’s Claude Opus 4.6, roughly 16.7 times as much. For output, M2.5 costs $1.10 per million tokens and GLM-5 $2.55, while Claude Opus 4.6 costs $25, about 22.7 times the M2.5 price and 9.8 times the GLM-5 price.
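The price multiples follow directly from the listed rates. As a rough illustration, the sketch below recomputes them and prices a hypothetical workload of 10 million input tokens and 2 million output tokens. The workload size and the helper `workload_cost` are assumptions made for this example; only the per-million-token prices come from the article.

```python
# USD per million tokens, as quoted in the article: (input, output).
prices = {
    "MiniMax M2.5": (0.30, 1.10),
    "GLM-5": (0.30, 2.55),
    "Claude Opus 4.6": (5.00, 25.00),
}

def workload_cost(model: str, input_m: float, output_m: float) -> float:
    """Cost in USD for input_m / output_m million input / output tokens."""
    in_price, out_price = prices[model]
    return input_m * in_price + output_m * out_price

opus_in, opus_out = prices["Claude Opus 4.6"]

# How many times more Claude Opus 4.6 costs per token than each other model.
ratios = {
    model: (opus_in / in_price, opus_out / out_price)
    for model, (in_price, out_price) in prices.items()
    if model != "Claude Opus 4.6"
}

for model, (in_ratio, out_ratio) in ratios.items():
    print(f"Opus vs {model}: input {in_ratio:.1f}x, output {out_ratio:.1f}x")

# Hypothetical workload: 10M input tokens, 2M output tokens.
for model in prices:
    print(f"{model}: ${workload_cost(model, 10, 2):.2f}")
```

For this assumed workload the bill comes to about $5.20 on M2.5 and $8.10 on GLM-5, against $100 on Claude Opus 4.6. The real gap for any given developer depends on the mix of input and output tokens.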

This pricing gap strongly influences developers’ choices.

The cost advantage is largely driven by architectural innovation, particularly the adoption of Mixture-of-Experts (MoE) models. MoE architectures split a large model into multiple smaller “expert” networks and activate only a subset of them for each input token, so most of the model’s parameters sit idle on any given step. Compared to dense models, this reduces memory usage by 60% and increases throughput by up to 19 times.
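The routing idea behind MoE can be sketched in a few lines. The toy example below is not any vendor's implementation: the experts are simple scalar functions, the gate scores are hard-coded rather than learned, and the names `top_k_route` and `moe_forward` are hypothetical. It only shows how a gate selects the top k experts and combines their outputs while the rest are never evaluated.

```python
import math

def softmax(xs):
    """Numerically stable softmax over a list of scores."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def top_k_route(gate_logits, k):
    """Pick the k highest-scoring experts and renormalise their weights."""
    probs = softmax(gate_logits)
    chosen = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    norm = sum(probs[i] for i in chosen)
    return {i: probs[i] / norm for i in chosen}

def moe_forward(x, experts, gate_logits, k=2):
    """Weighted sum of the outputs of only the k selected experts."""
    weights = top_k_route(gate_logits, k)
    return sum(w * experts[i](x) for i, w in weights.items())

# Toy setup: 8 "experts", each a scalar function x -> s * x.
experts = [lambda x, s=s: s * x for s in range(1, 9)]

# Hard-coded gate scores for one input (a real gate is a learned layer).
gate_logits = [0.1, 2.0, -1.0, 0.5, 3.0, 0.0, -0.5, 1.0]

weights = top_k_route(gate_logits, k=2)
output = moe_forward(1.0, experts, gate_logits, k=2)

print(f"active experts: {sorted(weights)} ({len(weights)} of {len(experts)})")
print(f"output: {output:.4f}")
```

Here only two of the eight experts run for the input, which is the source of the compute savings: total parameter count can grow while the work done per token stays close to that of a much smaller dense model.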

In addition, Chinese companies are pursuing vertical integration across algorithms, cloud infrastructure, and AI chips to further optimize efficiency and reduce costs.

From Traffic to Fuel: The Changing Value of Tokens

The rapid rise in token consumption reflects a fundamental shift in how AI is used. Rather than serving as simple Q&A tools, AI systems are increasingly embedded in workflows as productivity tools.

A recent report by Guolian Minsheng Securities describes this phenomenon as “token inflation”—not rising prices, but increasing token consumption per user and per unit time.

This trend is driven by three factors: the shift from lightweight queries to complex task execution (such as coding and document generation), the rise of AI agents that perform multi-step processes, and the growing use of deep reasoning, all of which significantly increase token usage.

As a result, tokens are no longer akin to low-cost internet “traffic,” but rather the “fuel” powering AI-driven production.

This aligns with Nvidia CEO Jensen Huang’s view that “compute is revenue” and “inference is revenue.” Without compute, tokens cannot be generated; without tokens, revenue cannot grow.


Toward a New Pricing Paradigm

Looking ahead, AI pricing models are expected to evolve from simple usage-based billing toward hybrid models that combine “fuel” (tokens) and “outcomes.”

While token prices are likely to decline due to technological progress and economies of scale, enterprises will increasingly pay for results as AI systems deliver measurable productivity gains.

In the agent era, pricing will also become more dynamic and multi-dimensional, incorporating factors such as computational intensity, frequency of calls, and task complexity.

川公网安备 51019002001991号
