Photo/Liu Xuemei (NBD)

After half a year of internal strife, OpenAI’s team dedicated to “defending humanity” has fallen apart. Chief Scientist Ilya Sutskever and his confidant Jan Leike both resigned this week, and on Friday the company confirmed that the Superalignment Team (its AI risk team), which the two co-led, had been disbanded.

On Tuesday, May 14th, Eastern Time, OpenAI announced that its Chief Scientist and Co-founder Ilya Sutskever would be leaving the company, with Research Director Jakub Pachocki taking over his position.

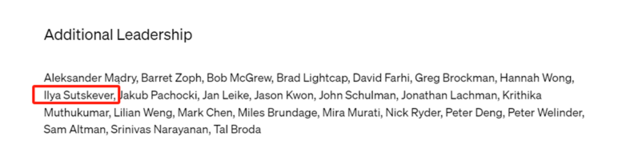

Since OpenAI’s internal conflict ended last November, Sutskever has rarely appeared in public, and there has been no news about his role following the changes to the company’s board. On OpenAI’s newly released GPT-4o page, Sutskever’s name appears in the “Other Leaders” section.

Photo/OpenAI

Sutskever also announced his resignation on X, accompanied by a photo. In the picture, he stands alongside his successor Pachocki, Co-founder Greg Brockman, CEO Sam Altman, and CTO Mira Murati, marking this historic moment.

Photo/X

Regarding his future plans, Sutskever stated in his X post that he would pursue a new project that is personally meaningful to him and would share more details when appropriate. Some speculate that he might join Musk’s xAI; dramatic as that would be, the possibility cannot be ruled out. Others guess that, given his stature in the field, his new project is still related to generative AI products like ChatGPT and might be open source.

Born in the Soviet Union in 1985 and raised in Israel, Sutskever speaks Russian, Hebrew, and English. During his studies at the University of Toronto, he was mentored by “the father of modern artificial intelligence” Geoffrey Hinton and collaborated with him to develop AlexNet, a neural network that significantly advanced deep learning technology in image recognition.

In 2015, at Musk’s invitation, Sutskever joined OpenAI as part of the founding team, playing a key role in the development of the ChatGPT language model and the DALL-E image generator. The New York Times reported that after joining OpenAI, Sutskever participated in AI breakthroughs involving neural networks, which have significantly advanced the field over the past decade. In 2023, Nature named Sutskever one of its ten people of the year in science (Nature’s 10), hailing him as “a pioneer of ChatGPT and other AI systems that change society.”

However, in a rare interview with MIT Technology Review last October, Sutskever said that he did not intend to build the next GPT or the next DALL-E image generation model, but rather aimed to figure out how to prevent superintelligent AI from becoming uncontrollable. As a futurist, he believes that this still hypothetical technology will eventually emerge.

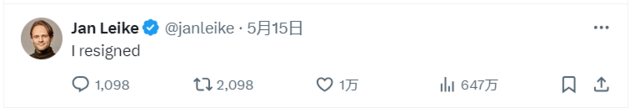

Hours after Sutskever announced his departure, his confidant Jan Leike, one of the leaders of OpenAI’s Superalignment Team, also announced his resignation on X. On Friday, OpenAI confirmed that the Superalignment Team led by Sutskever and Leike had been disbanded. The team’s research work will be integrated into other research groups within OpenAI.

Photo/X

In September 2023, Leike was named one of the 100 most influential people in the field of artificial intelligence by Time magazine. On Friday, Leike publicly revealed some of the reasons for his departure on X. He said he had long-standing disagreements with OpenAI’s senior management over the company’s core priorities, and that his team faced significant obstacles in advancing its research projects and securing computing resources. In his view, building superintelligent machines is inherently dangerous, and OpenAI carries a heavy responsibility on behalf of all of humanity. In recent years, however, he said, safety culture and processes have given way to products.

Musk commented on the dissolution of OpenAI’s Superalignment Team, stating: “This shows that safety is not OpenAI’s top priority.”

The consecutive departures of Sutskever and Leike are just part of the recent turmoil within the OpenAI team. According to public statements and media reports, nine executives and employees have resigned from OpenAI since the beginning of this year.

As reported by The Information, Diane Yoon, Vice President of Human Resources at OpenAI, and Chris Clark, who was in charge of non-profit and strategic planning, resigned a few weeks ago. In April, researchers Leopold Aschenbrenner and Pavel Izmailov, who had previously worked on the Superalignment Team, also left OpenAI. In February, Andrej Karpathy, one of the company’s founding members and an AI researcher, announced his departure to focus on personal projects.
