Photo/Liu Guomei (NBD)

On November 17, local time, Sam Altman, the co-founder and CEO of OpenAI, was abruptly dismissed by the company’s board of directors. The unexpected move shocked the world. Just eleven days earlier, Altman had been on stage at OpenAI’s first developer conference, unveiling GPTs and setting the AI world abuzz.

After Altman was dismissed, Greg Brockman, the co-founder and president of OpenAI, announced his departure from the company. On the evening of the 17th, three senior researchers from OpenAI also resigned.

Since ChatGPT launched on November 30 last year, Altman and OpenAI have rarely been out of the headlines. Few expected that, less than a year later, this technology star would be torn by internal strife and its core team would fall apart.

However, according to a November 19 report by The Verge citing several people familiar with the matter, the OpenAI board is in discussions with Altman about his returning to the company as CEO. One source said that Altman, having been fired so abruptly, felt “conflicted” about coming back and wanted major changes to the company’s governance.

Why was Altman pushed out so easily? If he really leaves, will OpenAI’s push toward commercialization stall? And where does this star company go from here?

The board considers letting Altman return


On November 17, Altman was abruptly dismissed, and his close ally Brockman soon announced that he was quitting. According to The Information, three senior OpenAI researchers also resigned on the evening of the 17th: research director Jakub Pachocki, AI risk analysis team lead Aleksander Madry, and Szymon Sidor, a researcher who had worked at OpenAI for seven years. Two of them expressed support for Brockman.

The Verge reported that the board was in talks with Altman just one day after ousting him, a sign that OpenAI was in free fall without him. People close to OpenAI said that, beyond the senior researchers who resigned that day, more resignations were brewing. Altman and Brockman had also been discussing with friends and investors the possibility of founding another company. If Altman decides to leave and start a new company, those employees are certain to follow him.

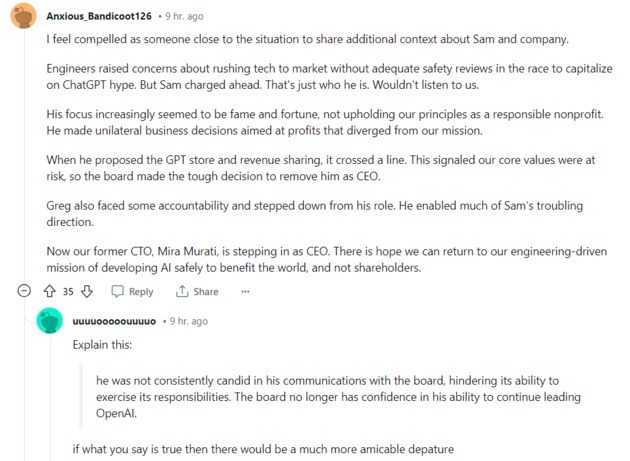

According to OpenAI’s statement, Altman’s dismissal followed a deliberative review process by the board, which concluded that he had not been consistently candid in his communications with the board. Having lost confidence in his ability to continue leading the company, the board named chief technology officer Mira Murati as interim CEO. Posts by Altman and Brockman on X suggest that both learned of the decision only at the last minute, a sign of a rift within OpenAI.

On November 7, OpenAI took a new step toward commercialization. At the company’s first developer conference, Altman launched GPTs, a simple tool for building custom versions of ChatGPT, a move widely read as an attempt to build a grand, Apple-like AI ecosystem. Developers flocked in and strained the servers; within just one week, 15,000 GPTs had been created. On the 15th, Altman announced that ChatGPT Plus would pause new sign-ups. This appears to have been the fuse that set off the leadership upheaval.

Bloomberg reported that Altman was dismissed over disagreements with the board about AI safety, the pace of technology development, and commercialization, particularly with Ilya Sutskever, OpenAI’s other co-founder and chief scientist and a central figure behind the company’s series of technical breakthroughs.

The Information reported the details of Altman’s dismissal. At noon on the 17th, Sutskever held a video call with the other board members, excluding Altman and Brockman, and the board voted to dismiss Altman.

A few minutes later, in another video call, Brockman was told that he had been removed from the board but could keep his role as president. OpenAI then issued its statement.

The board originally consisted of Altman, Brockman, Sutskever, Quora CEO Adam D’Angelo, technology entrepreneur Tasha McCauley, and Helen Toner of Georgetown University’s Center for Security and Emerging Technology. In other words, it was the latter four members who voted for the dismissal.

The Information obtained a recording of the all-hands meeting OpenAI held after Altman’s dismissal, at which two employees asked Sutskever whether the dismissal amounted to a “coup.” Sutskever disagreed: “I can understand why you chose that word, but I disagree.” He also emphasized, “This is the board fulfilling its duty to the mission of the non-profit, which is to ensure that OpenAI builds AGI that benefits all of humanity.”

When asked “Is this the best way to manage this company”, he answered: “To be fair, I admit that there are some undesirable factors.”

Friction started a month ago: AI safety became the focus

The backgrounds of the four board members who voted to oust Altman point in the same direction: all of them lean toward the technical side and hold AI safety-related responsibilities. AI safety was an important factor behind this leadership change.

Just as Sutskever stressed the company’s mission of building AGI that benefits all of humanity at the all-hands meeting, OpenAI’s statement said the board must avoid “enabling AI or AGI that harms humanity or excessively concentrates power,” must ensure that “AGI research is safe,” and that the board’s primary responsibility is “to humanity.”

The Information reported that AI safety had already been debated inside OpenAI before Altman’s dismissal. Afterward, Murati sent a memo to employees describing the company’s three pillars: “advancing our research plan as much as possible, our safety and alignment work (especially the scientific prediction of AI capabilities and risks), and sharing our technology with the world in ways that benefit everyone.”

Sutskever speaks often about AI safety. In the 2019 documentary “iHuman,” he said: “The future is going to be good for the AIs regardless; it would be nice if it would be good for humans as well.” In an interview in July this year, he said his greatest worry was the danger posed by powerful AGI within the next few years.

In July this year, Sutskever and OpenAI researcher Jan Leike formed a new team dedicated to finding technical ways to keep AI systems from going out of control. In a blog post, OpenAI said it would devote one-fifth of the computing resources it had secured to addressing the threat posed by AGI.

But according to Bloomberg, a month earlier (in October), Sutskever clashed with Altman and Brockman and had his responsibilities reduced. He then appealed to the board and won the backing of some directors, including Helen Toner. This set OpenAI on the path toward internal strife and division.

AI safety has been a focus for OpenAI since its founding. The concern is that powerful models could be misused by malicious actors, for example to develop biological weapons, generate convincing deepfakes, or attack critical infrastructure. If AI slipped out of control, the cost could be human lives.

In 2015, Altman, Sutskever, Musk and others co-founded OpenAI as a non-profit to counterbalance Google and other for-profit AI companies. They shared a consensus that AI should not be pursued for profit at the expense of public safety, and for years this was also an important draw when recruiting employees.

Internal differences emerged later. In 2020, a group of employees left over concerns about AI safety and disagreements about commercialization, and went on to found Anthropic, now an OpenAI competitor. Dario Amodei, one of Anthropic’s founders, told Forbes that he left OpenAI to build a more trustworthy large model. As early as 2018, Musk had parted ways with OpenAI for the same reason.

Under Altman’s leadership, OpenAI has grown rapidly but also faces accusations over safety, especially data issues. Earlier this year, Italy’s data protection authority accused OpenAI of violating EU data protection law, and the case is still ongoing. In July, the US Federal Trade Commission opened an investigation into whether OpenAI harmed people by publishing false information and whether it engaged in “unfair or deceptive” privacy and data security practices.

Behind the scenes: a carefully designed company structure

Altman was both OpenAI’s CEO and a member of its board, so why was he so easy to push out? The answer lies in OpenAI’s organizational structure.

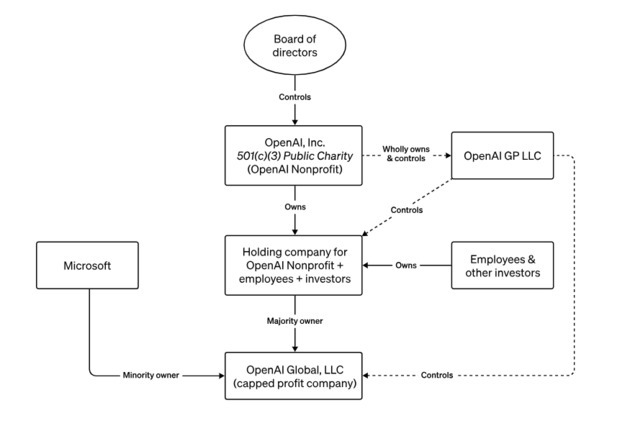

In 2019, Altman created a for-profit entity, OpenAI LP (a limited partnership), which was later restructured into the for-profit subsidiary OpenAI Global, overseen by the OpenAI non-profit. Microsoft’s investment went into this for-profit subsidiary, OpenAI Global.

According to OpenAI’s website, the non-profit controls OpenAI Global through a management entity, OpenAI GP LLC, which it wholly owns and controls. A majority of OpenAI’s board members are independent directors, and independent directors hold no shares in OpenAI.

Image source: OpenAI website

To keep the company from being swallowed up by the pursuit of profit, Altman proposed a restriction at the time: a theoretical cap on the returns the company could generate for its employees and investors.

According to OpenAI’s 2019 statement, returns for the first round of investors are capped at 100 times their investment, with lower caps for later investors. Any returns above the cap go to the non-profit.
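The cap is easiest to see with numbers. Below is a minimal sketch, assuming a hypothetical first-round investor who put in $10 million under the 100-times cap and a hypothetical $1.5 billion in total returns; the function name and all figures are made up for illustration and do not reflect OpenAI’s actual terms or accounting.

```python
def split_capped_return(investment: float, gross_return: float, cap_multiple: float = 100.0):
    """Split a hypothetical gross return into the investor's capped share
    and the excess that, under the structure described above, goes to the non-profit."""
    cap = investment * cap_multiple                # the most the investor can receive
    to_investor = min(gross_return, cap)           # investor keeps returns up to the cap
    to_nonprofit = max(gross_return - cap, 0.0)    # anything above the cap
    return to_investor, to_nonprofit

# Hypothetical figures, not OpenAI's actual terms: $10M invested, $1.5B returned
investor, nonprofit = split_capped_return(10e6, 1.5e9)
print(f"investor: ${investor:,.0f}, non-profit: ${nonprofit:,.0f}")
# prints "investor: $1,000,000,000, non-profit: $500,000,000"
```

Under these assumptions, the investor’s payout stops at $1 billion (100 times $10 million), and the remaining $500 million would flow to the non-profit. If total returns stayed below the cap, the non-profit’s share would simply be zero.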


Only a minority of OpenAI’s board members may hold stakes in OpenAI LP, and when the interests of the limited partners conflict with the company’s mission, only the board members without such stakes vote on the decision. This arrangement helps explain why Altman could be removed so easily.

Altman himself held no equity in OpenAI. He has said this was because he was already wealthy enough and did not need more financial returns. He previously served as president of the Silicon Valley incubator Y Combinator, known as an early backer of Silicon Valley startups. Over the past decade, Altman has made about 400 investments in startups, including Reddit, Stripe, Asana, Cerebras and Humane.

On November 9, Humane unveiled the AI Pin, a small device that attaches to clothing and has a built-in GPT large model. Humane is another of Altman’s ambitions outside OpenAI; he is the company’s largest shareholder, with a 14% stake.

According to foreign media reports, most of Altman’s wealth is tied up in equity in private companies, which makes his true net worth hard to determine; it is estimated at $500 million to $700 million. In a 2019 interview, he said OpenAI paid him a salary of $65,000 a year.

End of commercialization? Analyst: no fundamental change in direction

After this upheaval, observers began to ask: with Altman gone, will OpenAI’s path to commercialization come to a halt? Where will this star company go?

Rowan Curran, an AI analyst at Forrester, said that judging from OpenAI’s official wording and the confidence major shareholder Microsoft has shown in the company in recent weeks, Altman’s departure was related to issues specific to him rather than to problems with OpenAI’s large business. “I see this as a CEO change at a large technology company, but I don’t think OpenAI’s methods, direction and technology have fundamentally changed,” he said.

Thomas Hayes, chairman of Great Hill Capital, said, “In the short term, this will weaken OpenAI’s ability to raise more funds. But in the medium term, this is not a problem.”

Some analysts believe that, from a business perspective, this is a heavy blow: OpenAI may have lost its chance to build a dominant, closed-loop ecosystem like Apple’s. From a safety perspective, however, it may be a good thing.

After Altman’s dismissal, speculation was rampant that Microsoft wanted to take control of OpenAI, but Microsoft’s statement indicates it was not aware of the decision in advance. Microsoft also has no seat on OpenAI’s board.

Microsoft said on the 17th that it would continue to support OpenAI’s new CEO, and posted a statement from CEO Satya Nadella on its official website: “We have a long-term agreement with OpenAI… We will work together to continue to bring the great benefits of AI to the world.”

According to the New York Times, Microsoft currently holds 49% of OpenAI’s for-profit arm. Some analysts believe that with Altman’s departure, Microsoft may gain more say over OpenAI’s future development.

Daniel Ives, an analyst at Wedbush Securities, said OpenAI’s momentum is unlikely to slow as a result. “This is shocking. He is a key element of OpenAI’s success. We believe that after Altman leaves, Microsoft and Nadella will exert more control over OpenAI,” he said.

Sichuan Public Network Security Record No. 51019002001991
